
Loop Thinking: How to Make AI Do Complex Work Consistently

If you have Claude Enterprise or ChatGPT Enterprise at work, there's a technique from the leading edge of AI coding that can fix the inconsistent outputs you get when you use AI in Excel, Word, and PowerPoint.

It's called looping, and it's a mindset that changes the fundamental relationship between you and an AI agent.

The default way people prompt AI is broken for complex work

Most people approach AI tools with a set of directions that sound like errands: where to go and in what order. Process is a good start, but most people never finish the job by defining what "done" looks like at each stop.

"Make me a line chart of monthly volume using the attached. Add a stacked bar for SKU mix. Put a data table underneath, then write a narrative for a board presentation."

This works fine for simple tasks. But for anything with real analytical complexity — financial models, multi-sheet workbooks, reports where the numbers need to tie out — you end up in a frustrating cycle: the agent gives you something, you spot errors, you describe fixes, the agent introduces new errors.

The core problem is that when you prompt this way, you end up acting as the quality-control layer at the wrong point in the loop you've created. When every pass through the loop requires your eyeballs, your verification, and your patience, your way of using AI doesn't scale.

Flip the model: define what "done" looks like and get out of the loop

In the most ambitious AI coding setups, developers don't tell agents to "write a function that does X." They write tests (specific conditions the code must satisfy) and let the agent "loop" on its own, building a solution against those tests until they all pass. The agent writes code, runs the tests, sees what failed, fixes it, and runs again. A failing test doesn't trigger a message to the developer; it just triggers another attempt, so the developer only sees the output once every test passes.
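The build, test, fix cycle a coding agent runs can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular agent is implemented: `loop_until_pass`, `toy_agent`, and the two check names are hypothetical, and `toy_agent` stands in for the AI by fixing one reported failure per round.

```python
from typing import Callable

Check = Callable[[dict], bool]


def loop_until_pass(build, checks: dict[str, Check], max_rounds: int = 10):
    """Build a candidate, run every check, and rebuild until all checks pass.

    `build` stands in for the agent (hypothetical): it receives the names of
    the checks that failed last round and returns a new candidate.
    """
    failures: list[str] = []
    for round_no in range(1, max_rounds + 1):
        candidate = build(failures)
        failures = [name for name, check in checks.items() if not check(candidate)]
        if not failures:
            return candidate, round_no  # the user only ever sees this result
    raise RuntimeError(f"Still failing after {max_rounds} rounds: {failures}")


def toy_agent():
    """Hypothetical agent that starts out wrong and fixes one failure per round."""
    state = {"data_points": 17, "has_zero_month": True}

    def build(failures):
        if failures and failures[0] == "exactly 18 data points":
            state["data_points"] = 18
        elif failures and failures[0] == "no zero months":
            state["has_zero_month"] = False
        return dict(state)

    return build


checks = {
    "exactly 18 data points": lambda c: c["data_points"] == 18,
    "no zero months": lambda c: not c["has_zero_month"],
}

result, rounds = loop_until_pass(toy_agent(), checks)
print(f"Passed after {rounds} rounds: {result}")
```

The intermediate failures happen inside `loop_until_pass`; only the final, passing candidate comes back to the caller.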

You can do the exact same thing with enterprise AI tools and your business data, and you don't need to know how to code.

Here's what that looks like in practice. Instead of describing the steps to build an Excel workbook, you describe the validation criteria the workbook must meet, and ask for a formula-driven grid that shows whether each test is passing.

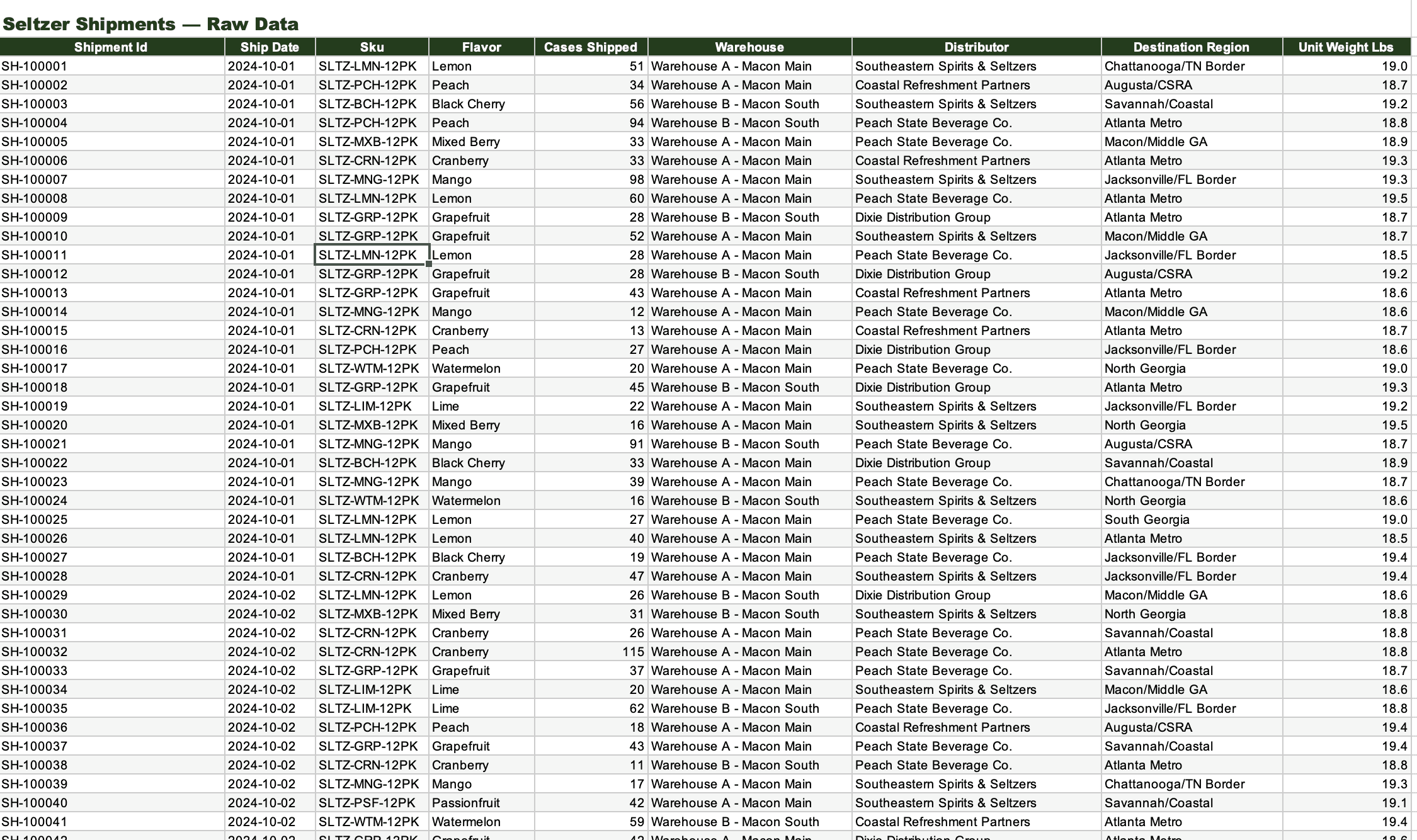

A worked example: Seltzer shipment analysis

Say I run a booming seltzer enterprise with 18 months of shipment data across 10 flavors, and I need a workbook with a monthly volume trend and a SKU mix breakdown.

A typical prompt tells the AI which charts to make. A prompt that puts me in the right part of the loop describes what must be true about the output before the agent responds to me:

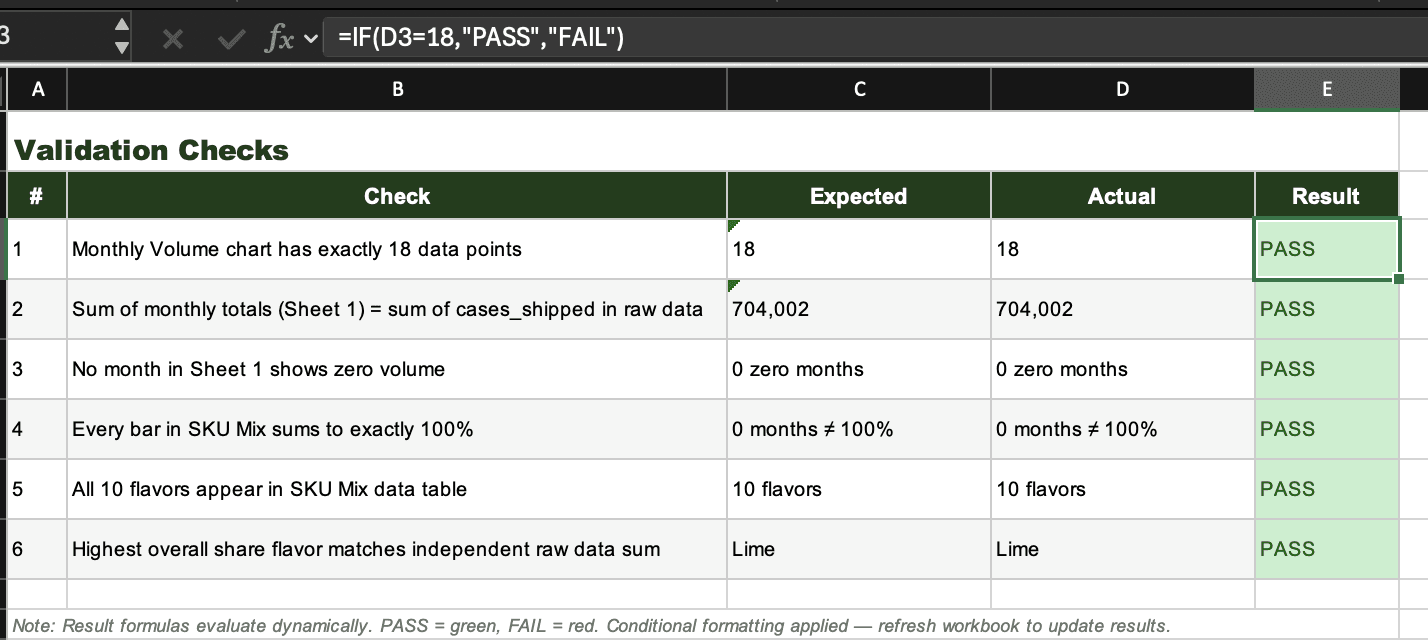

The monthly volume chart must have exactly 18 data points

The sum of monthly totals must equal the sum of all individual cases shipped in the raw data

No month can show zero volume

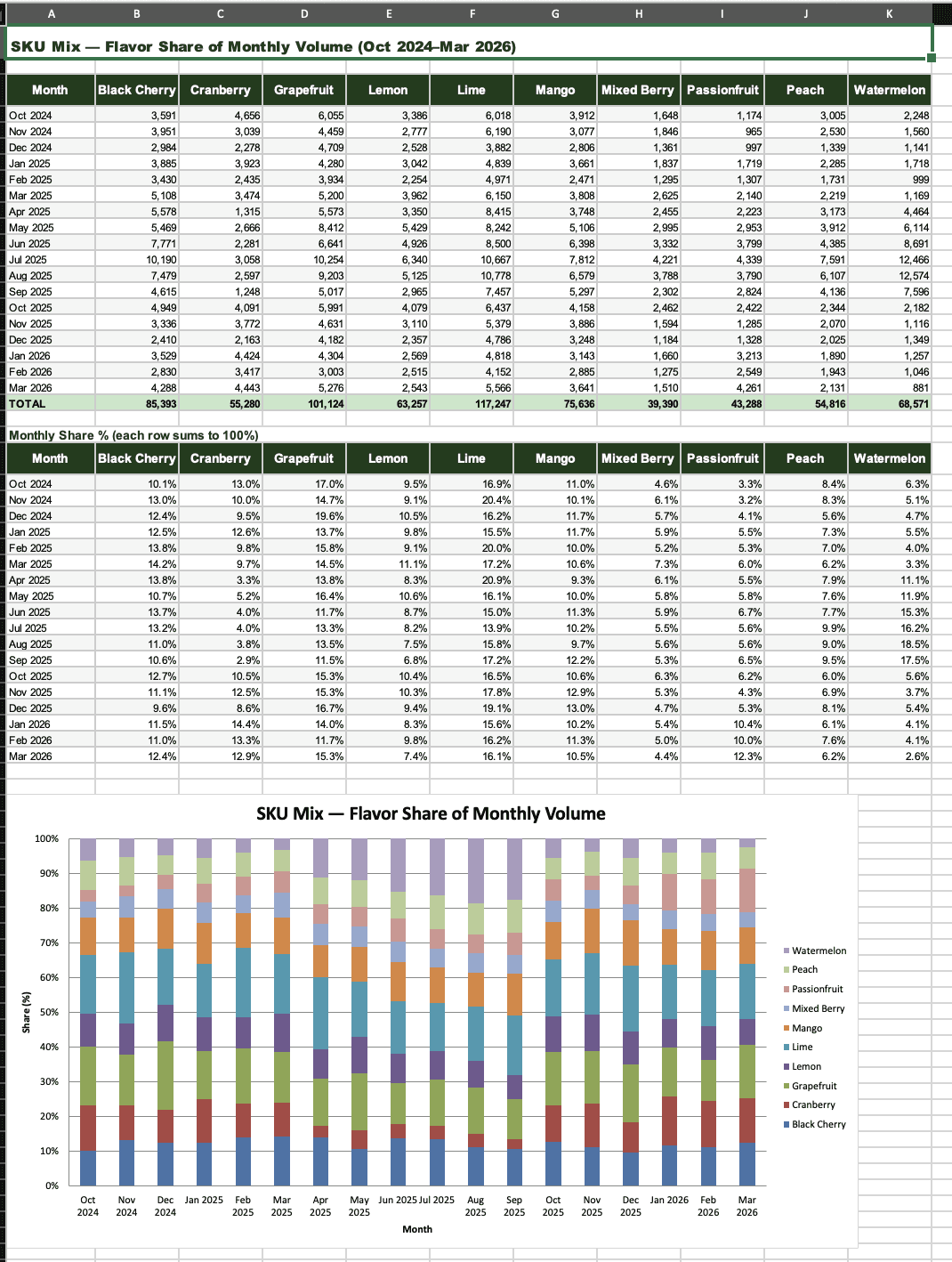

Every bar in the SKU mix chart must sum to exactly 100%

All 10 flavors must appear in the legend and data table

The highest-share flavor in the chart must match an independent calculation from the raw data

Then you add: "Log pass/fail on a Validation sheet. If any check fails, fix it before returning. Only return when all six pass."
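To make the six checks concrete, here is a minimal Python sketch of the kind of script an agent might write in its sandbox. It is an illustration under assumptions, not what Claude or ChatGPT actually generate: the month labels, flavor names, and case counts are made up, and names like `sku_mix` and `validation_sheet` are hypothetical. Checks that compare against the source recompute their expected values directly from the raw rows.

```python
import math
from collections import Counter, defaultdict

# Hypothetical sample data: 18 months x 10 flavors, made-up case counts.
MONTHS = [f"2024-{m:02d}" for m in range(1, 13)] + [f"2025-{m:02d}" for m in range(1, 7)]
FLAVORS = [f"Flavor {c}" for c in "ABCDEFGHIJ"]
raw = [
    {"month": mo, "flavor": fl, "cases": 100 + 10 * i + j}
    for j, mo in enumerate(MONTHS)
    for i, fl in enumerate(FLAVORS)
]

# --- Chart data the agent builds from the raw rows ---
monthly_volume = defaultdict(int)                 # month -> total cases
by_month = defaultdict(lambda: defaultdict(int))  # month -> flavor -> cases
for r in raw:
    monthly_volume[r["month"]] += r["cases"]
    by_month[r["month"]][r["flavor"]] += r["cases"]

# SKU mix: each flavor's cases as a percent of that month's total.
sku_mix = {
    mo: {fl: 100 * c / monthly_volume[mo] for fl, c in flavors.items()}
    for mo, flavors in by_month.items()
}

# Chart's top flavor: weight each month's shares by that month's volume.
chart_weight = defaultdict(float)
for mo, mix in sku_mix.items():
    for fl, pct in mix.items():
        chart_weight[fl] += pct * monthly_volume[mo]
chart_top = max(chart_weight, key=chart_weight.get)

# --- Independent recalculations straight from the raw rows ---
raw_total = sum(r["cases"] for r in raw)
raw_flavors = {r["flavor"] for r in raw}
flavor_totals = Counter()
for r in raw:
    flavor_totals[r["flavor"]] += r["cases"]
independent_top = flavor_totals.most_common(1)[0][0]

# --- The six checks ---
checks = [
    ("Volume chart has exactly 18 points", len(monthly_volume) == 18),
    ("Monthly totals tie to raw cases", sum(monthly_volume.values()) == raw_total),
    ("No month shows zero volume", all(v > 0 for v in monthly_volume.values())),
    ("Every mix bar sums to 100%",
     all(math.isclose(sum(m.values()), 100.0, abs_tol=1e-6) for m in sku_mix.values())),
    ("All 10 flavors appear",
     len(raw_flavors) == 10 and all(set(m) == raw_flavors for m in sku_mix.values())),
    ("Top flavor matches raw data", chart_top == independent_top),
]

# --- Validation sheet: log PASS/FAIL; the agent only returns if all pass ---
validation_sheet = [("Check", "Result")] + [(n, "PASS" if ok else "FAIL") for n, ok in checks]
for name, status in validation_sheet:
    print(f"{name:<38}{status}")
all_pass = all(ok for _, ok in checks)
print("Ready to return" if all_pass else "Keep looping")
```

If `all_pass` is false, the agent's job is to fix whatever produced the failing row and run the script again, rather than reporting back.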

This is the key shift. The agent now has a computer (Claude and ChatGPT Enterprise both provide a sandboxed environment for working with your files), and it will write scripts, run calculations, check its own work, and iterate until everything passes. It keeps predicting tokens inside a problem-solving loop until the checks clear, and only then predicts the tokens of its response to you.

Because you defined the validation steps up front, you don't see the intermediate failures the model makes along the way. You see only the finished, validated product, formatted so you can review it quickly.

Tip: Use AI to help you come up with the validation criteria!

How the inspection step changes

Looping doesn't mean blind trust. The opposite, actually. Once you get the output, you inspect the validation itself:

Open the README — could a coworker follow this cold?

Stress-test the formulas: change an input value and see whether totals and charts update

Delete a flavor's rows from the raw data — does validation catch the discrepancy?

Open the Validation sheet — did it compute checks dynamically, or just hardcode "pass"?
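The last two inspection steps can be illustrated with a small Python sketch, assuming made-up data and hypothetical names (`run_checks`, `hardcoded`, `tampered`). It contrasts a Validation sheet that recomputes its checks from the raw rows with one that simply hardcodes PASS, then deletes one flavor's rows to see which version notices. Only three of the six checks are included to keep it short.

```python
from collections import defaultdict

# Hypothetical sample data: 18 months x 10 flavors, made-up case counts.
MONTHS = [f"M{n:02d}" for n in range(1, 19)]
FLAVORS = [f"Flavor {c}" for c in "ABCDEFGHIJ"]
raw = [{"month": mo, "flavor": fl, "cases": 100 + i}
       for mo in MONTHS for i, fl in enumerate(FLAVORS)]


def run_checks(rows):
    """Dynamic validation: recompute three of the checks from whatever rows exist."""
    monthly = defaultdict(int)
    flavors_seen = set()
    for r in rows:
        monthly[r["month"]] += r["cases"]
        flavors_seen.add(r["flavor"])
    return {
        "Volume chart has 18 points": len(monthly) == 18,
        "No month shows zero volume": all(v > 0 for v in monthly.values()),
        "All 10 flavors appear": len(flavors_seen) == 10,
    }


# A hardcoded Validation sheet: it says PASS no matter what the data does.
hardcoded = {name: True for name in run_checks(raw)}

# Inspection step: delete one flavor's rows, then re-run both versions.
tampered = [r for r in raw if r["flavor"] != "Flavor C"]
dynamic = run_checks(tampered)

for name in hardcoded:
    h = "PASS" if hardcoded[name] else "FAIL"
    d = "PASS" if dynamic[name] else "FAIL"
    print(f"{name:<28} hardcoded={h}  dynamic={d}")
```

A "totals tie to the raw data" check would still pass after this deletion, because the chart total and the raw sum shrink together; it is the fixed expectation of 10 flavors that exposes the change. Running this kind of inspection tells you which of your checks actually guard against which failures.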

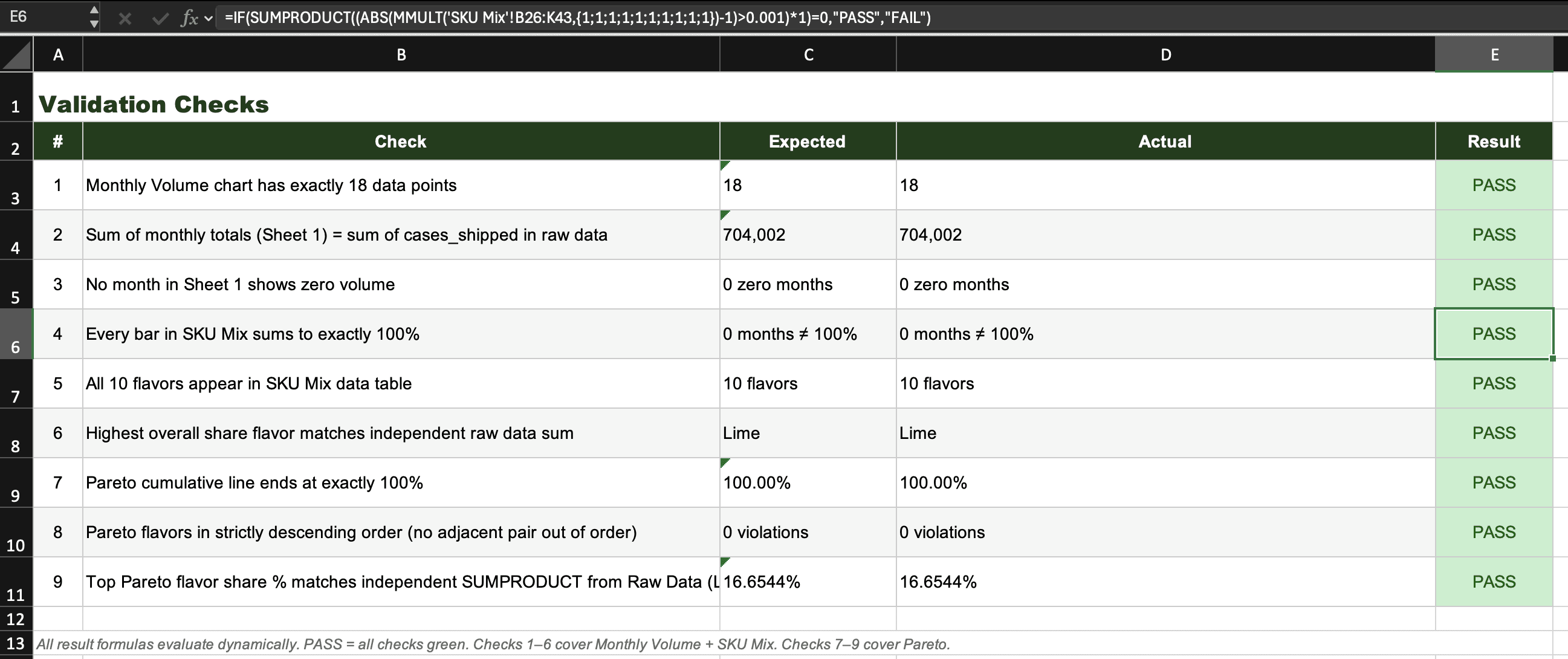

Next, add criteria and re-run

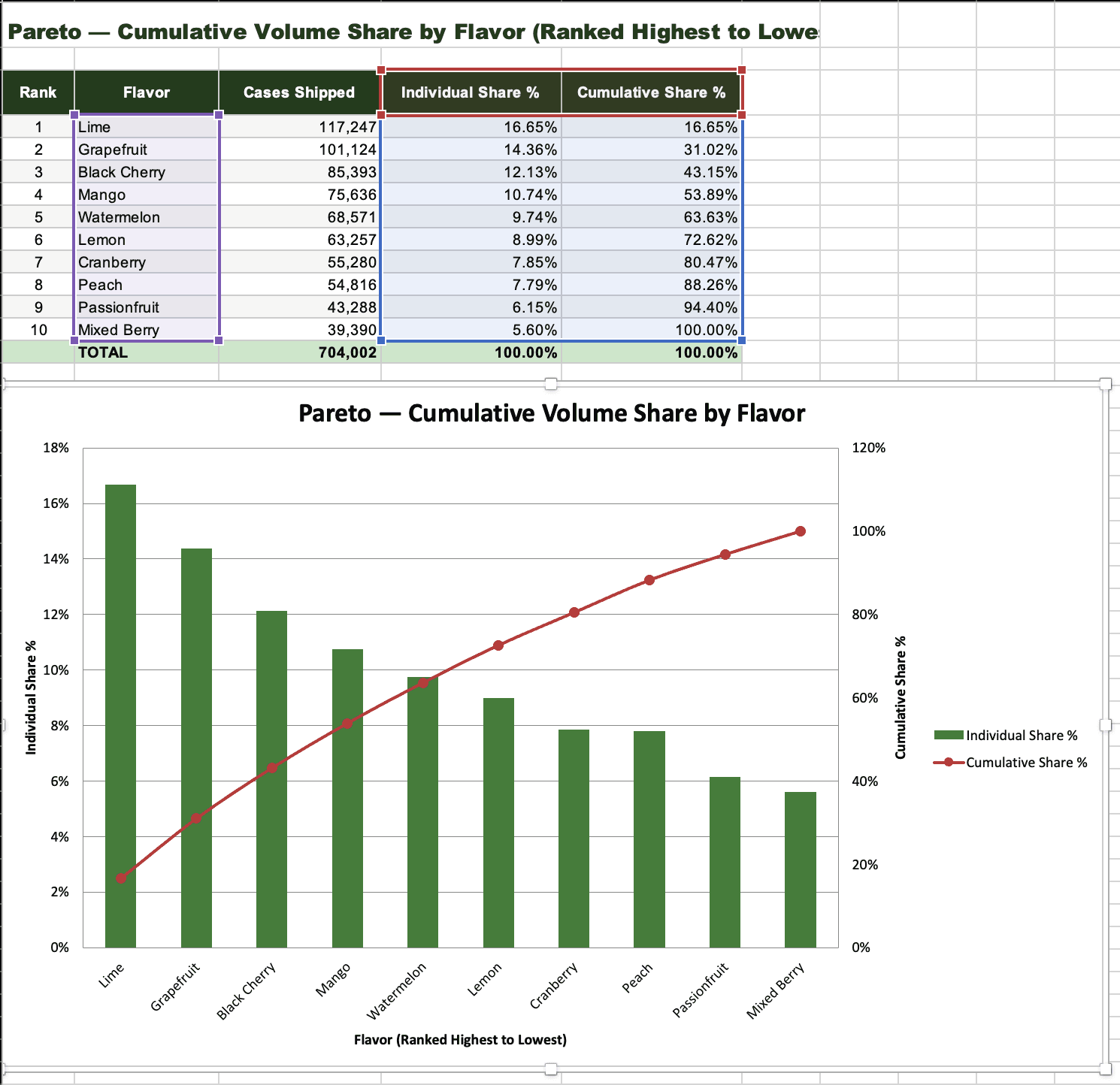

Need a Pareto analysis added? Don't start over with a new conversation. Add new validation checks — the cumulative line must end at exactly 100%, flavors must be in strictly descending order, the top flavor's percentage must match an independent calculation — and let the agent loop against all nine checks to produce the next version.
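The three Pareto checks can be sketched the same way in Python. Again, this is an illustration under assumptions, not the agent's actual output: the raw rows are made up, and `pareto`, `top_share`, and `pareto_checks` are hypothetical names. The top flavor's share is recomputed with a plain dictionary so it doesn't share code with the table it is checking.

```python
import math
from collections import Counter

# Hypothetical raw rows (flavor, cases): 18 months x 10 flavors, made-up numbers.
raw = [(f"Flavor {c}", 50 * (i + 1) + 7 * month)
       for i, c in enumerate("ABCDEFGHIJ") for month in range(18)]

# --- Pareto table the agent builds: flavors sorted by volume, with cumulative share ---
totals = Counter()
for flavor, cases in raw:
    totals[flavor] += cases
grand_total = sum(totals.values())

pareto = []  # rows of (flavor, cases, share %, cumulative %)
running = 0
for flavor, cases in totals.most_common():
    running += cases
    pareto.append((flavor, cases, 100 * cases / grand_total, 100 * running / grand_total))

# --- Independent recalculation of the top flavor's share, without Counter ---
per_flavor = {}
for flavor, cases in raw:
    per_flavor[flavor] = per_flavor.get(flavor, 0) + cases
top_share = 100 * max(per_flavor.values()) / sum(cases for _, cases in raw)

# --- The three new checks ---
pareto_checks = [
    ("Cumulative line ends at 100%", math.isclose(pareto[-1][3], 100.0, abs_tol=1e-9)),
    ("Flavors in strictly descending order",
     all(a[1] > b[1] for a, b in zip(pareto, pareto[1:]))),
    ("Top flavor share matches independent calc", math.isclose(pareto[0][2], top_share)),
]
for name, ok in pareto_checks:
    print(f"{name:<44}{'PASS' if ok else 'FAIL'}")
```

Keeping `running` as an integer means the final cumulative value comes out as exactly 100.0 here; the check still uses `math.isclose` so it would tolerate small float rounding if the shares were summed instead. Note that "strictly descending" fails if two flavors tie, so real data may call for a looser version of that check.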

Your validation set grows with the workbook, which makes the workbook easier to change in future conversations.

Every validation you add makes the next iteration more reliable, and every iteration should save you time. From here, keep adding validations that let you check less and less as new data enters your model, or that extend the analysis into other valuable slices.

The mental shift that unlocks this

When people ask how I work with AI, they often assume I trust it. I want to flip that assumption. I don't trust AI at all, and designing for the inevitable mistakes that probabilistic models make is precisely what makes top users more efficient.

There's nothing wrong with prompts that don't use this technique, and not every interaction needs this approach!

But if you're using AI for long-form analysis in Excel, Word, or PowerPoint and seeing variability in how well it completes your tasks, try asking: what checks can the agent complete on its own before it comes back to me?

Let the agent loop on the menial. You loop on the valuable.

Try it yourself

I've put together a one-page handout covering the three-step pattern and a sample dataset you can use to practice. Download them below.

If this feels like the type of training that would spark AI fluency on your team, reach out — rivers@cornelsonadvisory.com.